Note: This same article appears in my median.com account as well and possibly on researchgate.com as well

#Research_Excercise #ReseachProject #AI-Excercise

This article provides the algorithm for feature selection using Soft Rough Sets called Soft Quick Reduct. These techniques are based on Soft Rough Sets, a new technique to study the relationship in smaller datasets. The algorithms are not yet fully verified over data. If in course of time, we implement it we shall provide results, otherwise, you can continue its research with your results. Here, we just provide the outlines.

#Research_Excercise #ReseachProject

1. Introduction

Given the huge amount of data that is present these days in data warehouses and data centers. It is eminent that certain data be reduced to smaller forms to be understood by classical algorithms, not be just sent to deep learning methods. This process in which data is reduced to smaller dimensions but with the same or similar classification ability comes under feature reduction or feature selection algorithms. Feature selection comes in the branch of reduction of features or the columns that define the data. These are characteristics defining the data, and often not all characteristics are needed while processing the data. For example, some data characteristics may be dependent on each other and at other times some data characteristics may be dependent on other features, fully or partially. If they are partially dependent features, the dependency degree can be computed to know, if it is worth dropping it out of the data which is to be analyzed at the next levels. This selection of important features and dropping out of nonessential features is called feature selection. Feature selection can be performed on in various ways which vary from filter-based method, which does not take into account the decision classes to process and shorten the data, to wrapper methods which use decision class to process importance of features and embedded methods, which use classification methods to know which feature have what impact and yield classification ability as well.

The next section explains a famous and existing feature selection algorithm, viz. feature selection algorithm.

2. Quick Reduct Existing Algorithm

Let’s see what the existing famous Quick Reduct Algorithm is all about. Before that let us review what some important definitions are. The set of all features and the set of all tuples or objects defines an information system or knowledge base. Every attribute in the given knowledge base imposes an equivalence relation on U, the universe under consideration, which includes all tuples of data. These equivalence classes are set of all objects which are indistinguishable from each other in given knowledge, viz. attributes. Let P be a subset of features defining U then, the equivalence classes of P are called concepts, denoted by U/P. The equivalence classes of P are the collection of tuples that are similar under knowledge P.

In Rough Set theory a subset, say X, is represented by the pair of sets viz. Lower Approximation and Upper Approximation. Lower approximation of X is set of all elements which are contained in a partition of the Universe by the equivalence relation. Hence, lower approximation contains all elements which for sure belong to the set X. Reduct is the set of features which are all essential and these features are so that they are not fully dependent on each other. Thus, they are minimal independent set of features which are enough to define the space of all tuples with features. Typically, these include the core, the most essential part which is in each reduct and we must know reduct may not be unique for a data. The algorithm to find reduct using classical Rough Sets is as follows, as given in Fig 1. Here,

P is the dependency degree, that is how many elements of Universe are correctly classified given the attributes P defining it.

The algorithm proceeds as along as the dependency degree is increasing.

3. Soft Rough Sets

Why the need for soft sets to compute essential features in Quick Reduct? The answer is not in all problems we can get perfect equivalence relation to starting the task of determining the essential features. Hence Soft sets need to be studied. Soft Sets are defined as follows:

Definition. [Molodtsov 1999] A pair (F, E) is called a Soft Set over a universe under consideration U, if there is a mapping from the set E to P(U) the power set of U.

Definition. [Feng et al, 2011] Consider a Soft Set S = (F, A), where A is contained in E, over universe U of objects, then the pair P= (U, S) is called S-Soft Approximation Space.

Definition. [Feng et al, 2011] Let P be a S-Soft Approximation Space the Soft Rough lower Approximation of X w.r.t. P are defined as follows:

The three regions of Soft Rough Approximation Spaces are defined in a similar way as in rough Approximation Space, viz.,

4. Soft Rough Set based Quick Reduct

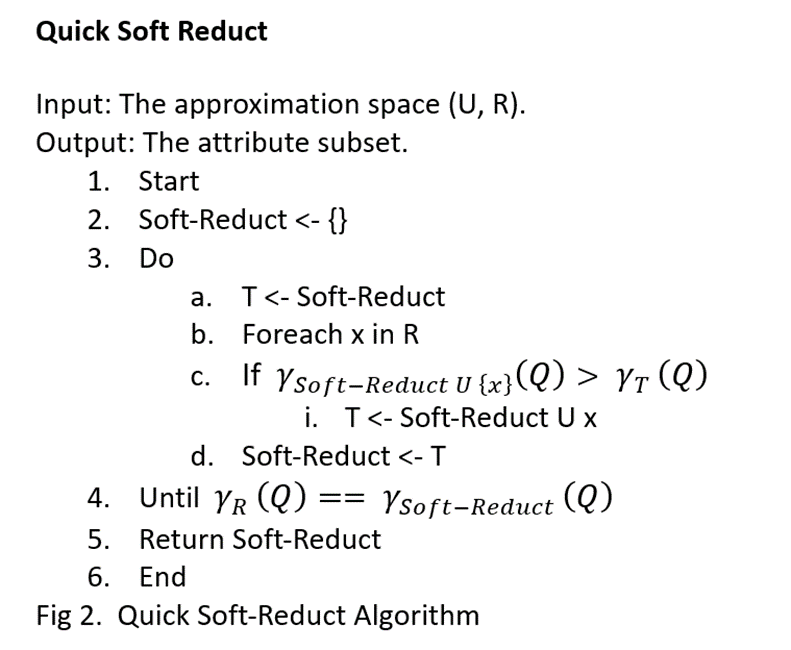

The Quick Reduct Algorithm based on the Soft Rough Set is given in Figure 2 below.

This algorithm can be studied and analyzed on key datasets on which the Quick Reduct algorithm has been analyzed. This is a research methodology where we may need to modify the algorithm while analyzing the outputs on the same datasets on which Quick Reduct was analyzed.

References

1. Pawlak, Z. (2012). Rough sets: Theoretical aspects of reasoning about data(Vol. 9). Springer Science & Business Media.

2. Tay, F. E., & Shen, L. (2002). Economic and financial prediction using rough sets model. European Journal of Operational Research, 141(3), 641–659.

3. Feng, F., Liu, X., Leoreanu-Fotea, V., & Jun, Y. B. (2011). Soft sets and soft rough sets. Information Sciences, 181(6), 1125–1137.

4. Molodtsov, D. (1999). Soft set theory — first results. Computers & Mathematics with Applications, 37(4–5), 19–31.